AI That Earns the Right to Scale

Start small, prove value, then expand carefully

AI projects often begin with ambition. Teams want better forecasts, faster decisions, or less manual work. The real question is not whether an idea sounds promising, but whether the system is good enough to trust in a live environment, with real users, real exceptions, and real consequences.

That is why the first step should not be scale. It should be earning the right to scale.

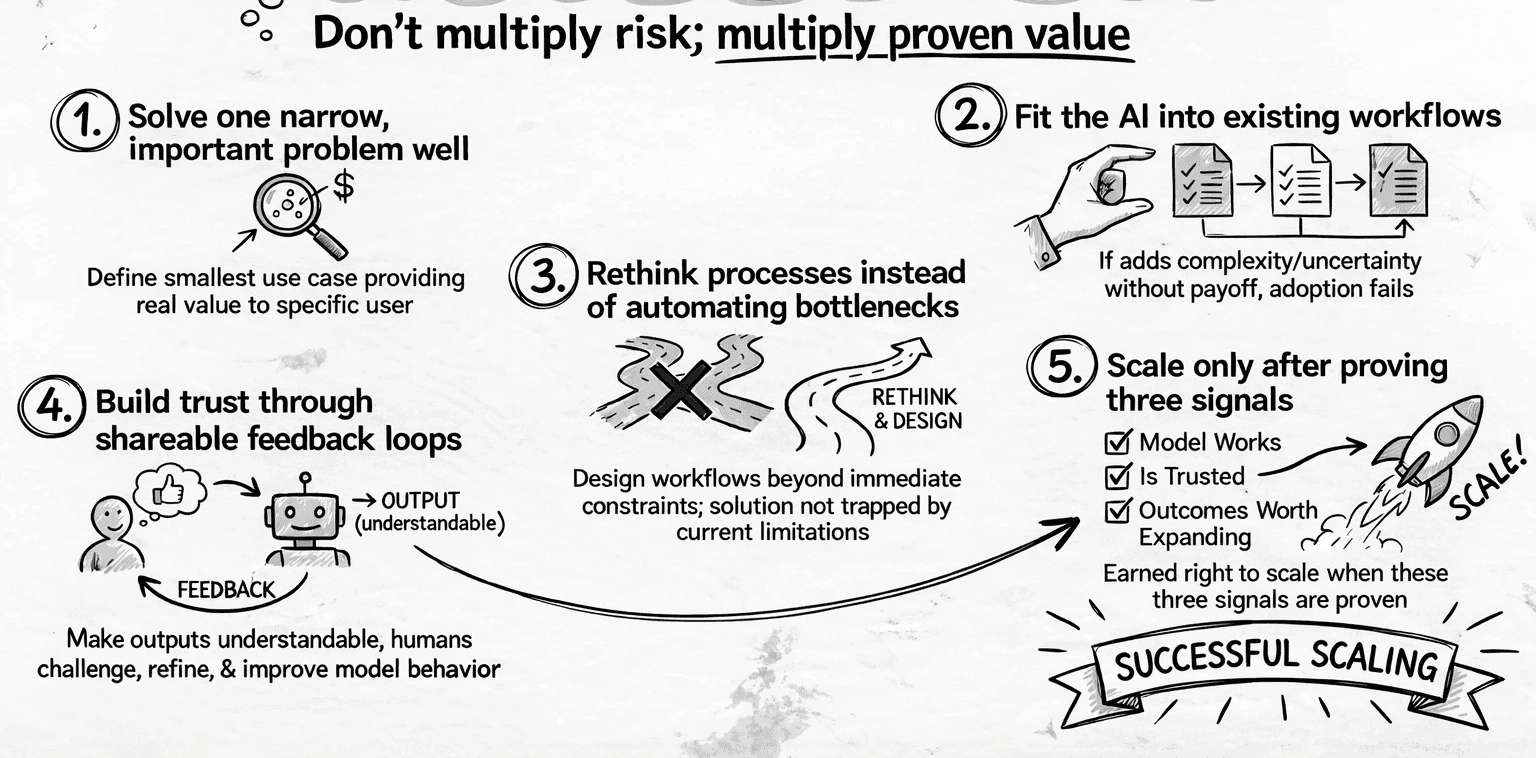

In practice, that means building something useful enough to demonstrate value, but narrow enough to manage. A first model does not need to solve everything. It needs to solve one important problem well enough that people can see the benefit, test the assumptions, and understand where it fits in the workflow. That early version becomes a working reference point, not just a slide deck or a proof of concept with no operational relevance.

This is especially important in industrial, municipal, and other operational settings. These environments already have established systems, routines, and responsibilities. AI only creates value when it fits into that reality. If it adds complexity, uncertainty, or extra manual checking without a clear payoff, adoption will stall. If it supports a real task, reduces friction, and gives people something they can use with confidence, momentum starts to build.

A practical first step is to define the smallest version of the use case that still matters. What decision will it support? Who will use it? What data is available today? What is the acceptable level of error? These questions matter more than model sophistication in the early phase. They help separate a useful implementation from an interesting experiment.
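One way to keep the scoping questions concrete is to write the answers down as a small, checkable specification before any model work starts. The Python sketch below is a hypothetical structure, not a prescribed template: `UseCaseSpec`, its fields, and the pump-maintenance example are all illustrative assumptions. It shows how the acceptable error level, once agreed, becomes a test that a pilot either passes or fails.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCaseSpec:
    """Answers to the four scoping questions, written down before any modeling."""

    decision: str                  # What decision will it support?
    users: tuple[str, ...]         # Who will use it?
    data_sources: tuple[str, ...]  # What data is available today?
    max_error_rate: float          # What is the acceptable level of error? (0.0 to 1.0)

    def is_defined(self) -> bool:
        """True only when every question has an answer and the error bound is a valid rate."""
        answered = bool(self.decision.strip()) and bool(self.users) and bool(self.data_sources)
        return answered and 0.0 <= self.max_error_rate <= 1.0

    def pilot_passes(self, errors: int, total: int) -> bool:
        """Compare the error rate observed in a pilot with the agreed bound."""
        if total <= 0:
            raise ValueError("total must be a positive number of cases")
        return errors / total <= self.max_error_rate


# Hypothetical example from a municipal utility; every value here is illustrative.
spec = UseCaseSpec(
    decision="Which pump maintenance orders to prioritize next week",
    users=("maintenance planners",),
    data_sources=("work-order history", "pump sensor alarms"),
    max_error_rate=0.10,
)
print(spec.is_defined())           # True
print(spec.pilot_passes(7, 100))   # True: 7% is within the 10% bound
print(spec.pilot_passes(12, 100))  # False: 12% exceeds it
```

Writing the error bound down before the pilot, rather than after, keeps the pass or fail decision from drifting toward whatever the first results happen to be.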

Just as important is designing a process that is not bound to the current obstacle. Too often, teams automate around today’s limitation instead of rethinking the workflow itself. The better approach is to design a process that works beyond the immediate bottleneck, so the solution is not trapped by the same constraint it was meant to remove. That requires a clear understanding of where the technology is strong and where its limits still call for human judgment, integration work, or fallback steps. Without that understanding, organizations risk building a process that works only under one narrow condition and breaks as soon as the context changes.
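A process that is not bound to one condition needs an explicit answer for the cases where the model is unsure or out of its depth. A minimal sketch of that idea is a routing rule: confident outputs flow through, uncertain ones go to a person, and anything outside the model's boundaries falls back to the existing procedure. The `Route` and `Prediction` types, the `in_known_range` flag, and both thresholds are assumptions for illustration. In particular, the sketch assumes the model produces a confidence score that actually tracks how often it is right, which has to be checked rather than taken on trust.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Route(Enum):
    AUTOMATIC = "automatic"        # output is used directly in the workflow
    HUMAN_REVIEW = "human_review"  # a person confirms or corrects it before action
    FALLBACK = "fallback"          # the established manual procedure takes over


@dataclass(frozen=True)
class Prediction:
    value: Optional[float]  # None when the model could not produce an answer
    confidence: float       # model-reported score between 0.0 and 1.0 (assumed meaningful)
    in_known_range: bool    # whether the input resembles the data the model was built on


def route(pred: Prediction, auto_threshold: float = 0.9, review_threshold: float = 0.6) -> Route:
    """Decide who acts on a prediction. The default thresholds are placeholders."""
    if pred.value is None or not pred.in_known_range:
        # Outside the model's boundaries: do not guess, hand over to the existing process.
        return Route.FALLBACK
    if pred.confidence >= auto_threshold:
        return Route.AUTOMATIC
    if pred.confidence >= review_threshold:
        return Route.HUMAN_REVIEW
    return Route.FALLBACK


print(route(Prediction(42.0, 0.95, True)))   # Route.AUTOMATIC
print(route(Prediction(42.0, 0.70, True)))   # Route.HUMAN_REVIEW
print(route(Prediction(42.0, 0.99, False)))  # Route.FALLBACK
```

The design choice here is that the fallback path is the normal process, not an error state, so the workflow keeps running when the model cannot help.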

The next step is to make the output shareable. That does not mean perfect. It means understandable, reviewable, and grounded enough that others in the organization can react to it. When people can see the model’s behavior, they can challenge it, refine it, and help improve it. That feedback loop is often what turns an AI idea into something real.
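Making output shareable can be as simple as storing each result together with what the model looked at, a plain-language reason, and what reviewers thought of it. The sketch below assumes hypothetical `Review` and `ReviewableOutput` records and a pump-maintenance example with invented values. It is one possible way to turn reviewer reactions into a feedback loop, not a specific tool or product.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Review:
    reviewer: str
    agrees: bool
    comment: str = ""


@dataclass
class ReviewableOutput:
    output_id: str
    recommendation: str
    inputs_used: dict[str, str]  # what the model looked at, so reviewers can check it
    rationale: str               # short plain-language reason shown with the output
    reviews: list[Review] = field(default_factory=list)

    def add_review(self, reviewer: str, agrees: bool, comment: str = "") -> None:
        self.reviews.append(Review(reviewer, agrees, comment))


def agreement_rate(outputs: list[ReviewableOutput]) -> Optional[float]:
    """Share of all reviews in which the reviewer agreed; None if nobody has reviewed yet."""
    reviews = [r for o in outputs for r in o.reviews]
    if not reviews:
        return None
    return sum(r.agrees for r in reviews) / len(reviews)


def open_disagreements(outputs: list[ReviewableOutput]) -> list[tuple[str, str, str]]:
    """(output_id, reviewer, comment) for every disagreement: input for the next iteration."""
    return [(o.output_id, r.reviewer, r.comment)
            for o in outputs for r in o.reviews if not r.agrees]


# Hypothetical outputs and reviews; all identifiers and values are illustrative.
service = ReviewableOutput("WO-101", "Service pump 4 this week", {"alarms_30d": "6"},
                           "Alarm count is well above the station average.")
service.add_review("planner_1", True)
service.add_review("planner_2", False, "Pump 4 was serviced last month")
defer = ReviewableOutput("WO-102", "Defer pump 7", {"alarms_30d": "0"},
                         "No alarms in the last 30 days.")
defer.add_review("planner_1", True)

print(round(agreement_rate([service, defer]), 2))  # 0.67
print(open_disagreements([service, defer]))
```

The list of disagreements is arguably the more useful output: each one is a concrete case for the team to discuss and, where needed, correct.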

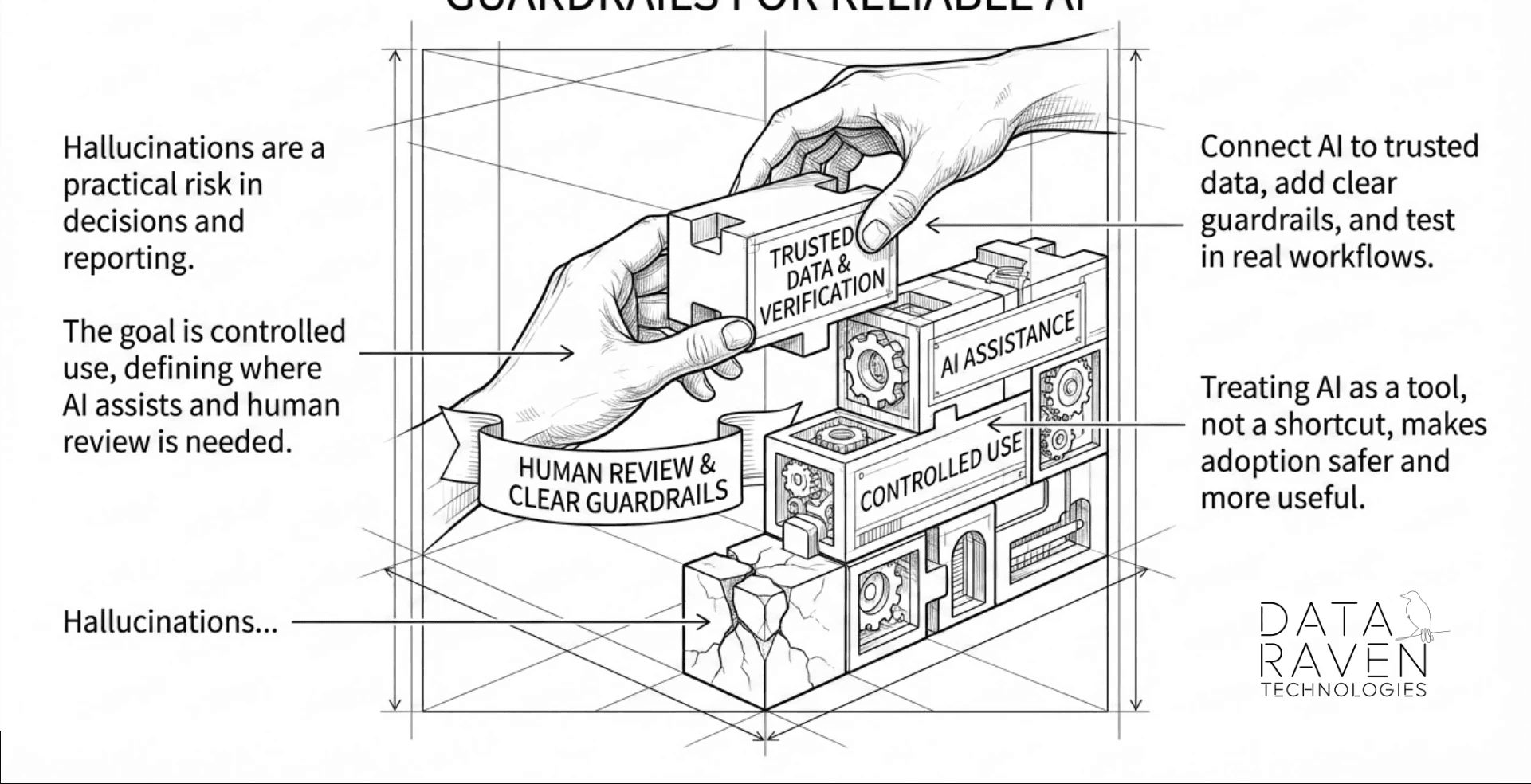

This is also where hallucinations and other reliability issues must be taken seriously. Large language models can be useful, but they still need verification, guardrails, and human oversight when the output matters. In many cases, the value is not in replacing judgment. It is in helping people work faster and with better information, while keeping control where it belongs.
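Verification does not have to be elaborate to be useful. One simple guardrail for generated summaries of operational data is to check that every number the model writes actually appears in the data it was given, and to send the summary to a person when it does not. This sketch covers only that narrow case: it does not verify claims made in words, and the regular expression ignores signs, units, and thousands separators. The function names, the station example, and its values are hypothetical.

```python
import math
import re

# Plain non-negative numbers such as 412 or 14.5. Signs, thousands separators,
# and units are deliberately out of scope for this sketch.
_NUMBER = re.compile(r"\d+(?:\.\d+)?")


def unsupported_numbers(generated_text: str, source_values: list[float]) -> list[str]:
    """Numbers that appear in the generated text but match no value in the source data."""
    flagged = []
    for token in _NUMBER.findall(generated_text):
        value = float(token)
        if not any(math.isclose(value, s) for s in source_values):
            flagged.append(token)
    return flagged


def check_summary(generated_text: str, source_values: list[float]) -> dict:
    """Attach the check result so the summary goes to a person when anything is unsupported."""
    flagged = unsupported_numbers(generated_text, source_values)
    return {"text": generated_text, "unsupported": flagged, "needs_review": bool(flagged)}


# Hypothetical source data and model output.
source = [412.0, 3.0, 12.5]
text = ("Station B logged 412 alarms last week, 3 of them critical, "
        "with a mean response time of 14.5 minutes.")
result = check_summary(text, source)
print(result["unsupported"])   # ['14.5']
print(result["needs_review"])  # True
```

Because dates and other incidental numbers are also flagged, the check errs toward more human review, which is usually the safer failure mode when the output matters.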

A model earns the right to scale when it shows three things: it works in a real process, it is trusted by the people who use it, and it produces outcomes worth expanding. Without those signals, scaling is just multiplying risk. With them, scaling becomes a disciplined next step.
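The three signals can be made measurable once a team agrees on proxies for them. The sketch below uses one possible set: the share of in-scope cases handled inside the live process, the share of outputs users accepted without overriding (a rough proxy for trust, not a measure of it), and the relative improvement on a baseline metric. `PilotEvidence`, the proxies, the default thresholds, and the pilot numbers are all hypothetical and would need to be agreed in each setting.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PilotEvidence:
    cases_in_live_process: int  # cases handled inside the real workflow, not in a sandbox
    cases_in_scope: int         # all cases the pilot was meant to cover
    outputs_accepted: int       # outputs users acted on without overriding
    outputs_seen: int           # outputs users actually looked at
    baseline_metric: float      # e.g. hours per task before the pilot (lower is better)
    pilot_metric: float         # the same metric measured during the pilot


def scale_signals(e: PilotEvidence, min_coverage: float = 0.8,
                  min_acceptance: float = 0.7, min_improvement: float = 0.1) -> dict[str, bool]:
    """Map the three signals onto measurable proxies. Thresholds are placeholders."""
    if e.cases_in_scope <= 0 or e.outputs_seen <= 0 or e.baseline_metric <= 0:
        raise ValueError("not enough evidence to evaluate the pilot")
    coverage = e.cases_in_live_process / e.cases_in_scope
    acceptance = e.outputs_accepted / e.outputs_seen
    improvement = (e.baseline_metric - e.pilot_metric) / e.baseline_metric
    return {
        "works_in_real_process": coverage >= min_coverage,
        "trusted_by_users": acceptance >= min_acceptance,
        "outcome_worth_expanding": improvement >= min_improvement,
    }


def ready_to_scale(e: PilotEvidence, **thresholds: float) -> bool:
    """Scale only when all three signals are present."""
    return all(scale_signals(e, **thresholds).values())


# Hypothetical pilot figures.
pilot = PilotEvidence(170, 200, 120, 150, baseline_metric=5.0, pilot_metric=4.0)
print(scale_signals(pilot))
# {'works_in_real_process': True, 'trusted_by_users': True, 'outcome_worth_expanding': True}
print(ready_to_scale(pilot, min_acceptance=0.85))  # False: 80% acceptance is below 85%
```

Requiring all three signals at once reflects the point above: strong results in one dimension do not compensate for missing evidence in another.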

That is the practical path for AI adoption. Start with one real problem. Design the process so it is not limited by the current obstacle, based on a clear understanding of what the technology can and cannot do. Build something good enough to share. Learn from how it performs in context. Then decide, with evidence, whether it deserves to grow.

All rights reserved to Data Raven Technologies