AI’s Last Frontier

Why judgment still matters

AI keeps improving, and that matters. In many organizations, it is now realistic to use AI and analytics for tasks that once required significant manual effort: sorting information, spotting patterns, drafting outputs, and supporting decisions. But the most valuable lesson from recent progress is not that automation can replace everything. It is that some work still depends on context, judgment, and accountability in ways that technology cannot fully absorb.

This is especially clear in operational environments. In industrial settings and municipalities, decisions are rarely made from clean datasets alone. They depend on incomplete information, shifting priorities, local constraints, and tacit knowledge held by experienced people. AI can support those decisions, but it should not be treated as a substitute for understanding the process itself. The harder the environment, the more important it becomes to distinguish between tasks that can be automated and decisions that must remain human-led.

That distinction is where many AI initiatives succeed or stall. Too often, teams begin with the technology rather than the workflow. They ask what the model can do before asking what the business process needs, who will use the output, and how trust will be established. In practice, AI earns the right to scale only when it proves it can fit into real work. That means it must be accurate enough, explainable enough, and reliable enough to support action rather than create more uncertainty.

A gradual approach is usually the most credible one. Start with a bounded use case where the value is clear and the risks are manageable. Define the decision the system is meant to support. Identify the data sources that are actually available. Test how the output will be reviewed, challenged, and used. If the result improves speed, consistency, or visibility without disrupting operations, then there is a case for expanding. If it does not, the problem may be in the process, the data, or the expectations—not just the model.

This is also why trust is not a soft issue. In real organizations, people adopt tools when they understand them and see that they behave consistently. Black-box outputs may be acceptable in some low-stakes settings, but in operational work they often slow adoption. Teams need to know what the system is seeing, where uncertainty remains, and when human review is required. The goal is not perfect automation. The goal is dependable support for better decisions.
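One way to make "when human review is required" concrete is a simple routing rule. The sketch below is illustrative only: the `Prediction` fields, the `route` function, and the 0.85 threshold are hypothetical assumptions, not part of any specific product, and a real threshold would be agreed with the people who own the decision.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    label: str          # what the model proposes
    confidence: float   # the model's own score, assumed to lie in [0, 1]
    high_stakes: bool   # set by the process owner, not by the model


# Hypothetical cut-off; chosen here only for illustration.
REVIEW_THRESHOLD = 0.85


def route(pred: Prediction) -> str:
    """Return 'auto' when the output may be acted on directly,
    and 'human_review' when a person must check it first."""
    if pred.high_stakes or pred.confidence < REVIEW_THRESHOLD:
        return "human_review"
    return "auto"


if __name__ == "__main__":
    examples = [
        Prediction("routine work order", 0.95, high_stakes=False),
        Prediction("routine work order", 0.60, high_stakes=False),
        Prediction("shut down pump station", 0.99, high_stakes=True),
    ]
    for p in examples:
        print(p.label, "->", route(p))
```

The point of the design is that high-stakes decisions go to a person regardless of how confident the model is, so the rule itself stays easy to explain to the teams who rely on it.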

For leaders, the practical question is not whether AI can do more over time. It clearly can. The question is where it should be used now, under what conditions, and with what safeguards. That is how AI moves from experimentation to operational value: not by claiming every human skill can be automated, but by respecting the ones that still cannot be.

Other articles that may interest you

AI Still Needs Human Judgment

AI Still Needs Human Judgment Some skills

AI’s Current Frontier

AI’s Next Frontier Why judgment still matters AI keeps improving, and that matters.

AI’s Current Frontier

AI’s Next Frontier Why judgment still matters AI keeps improving, and that matters.

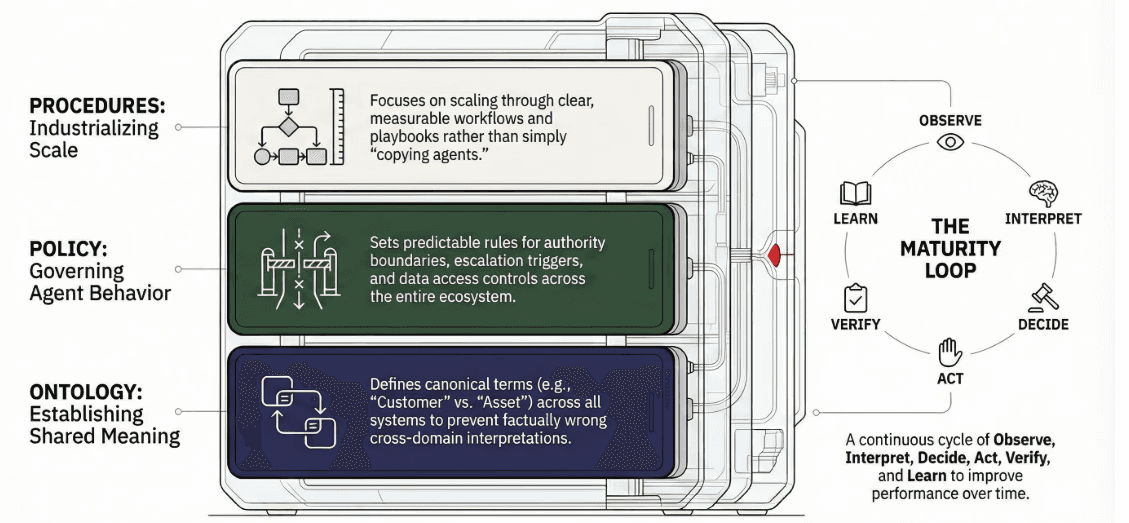

The Backbone of a Multi-Agent Organization

Policy and Ontology

All rights reserved to Data Raven Technologies