Before You Launch an AI Initiative in Manufacturing

Eight Questions Worth Answering First

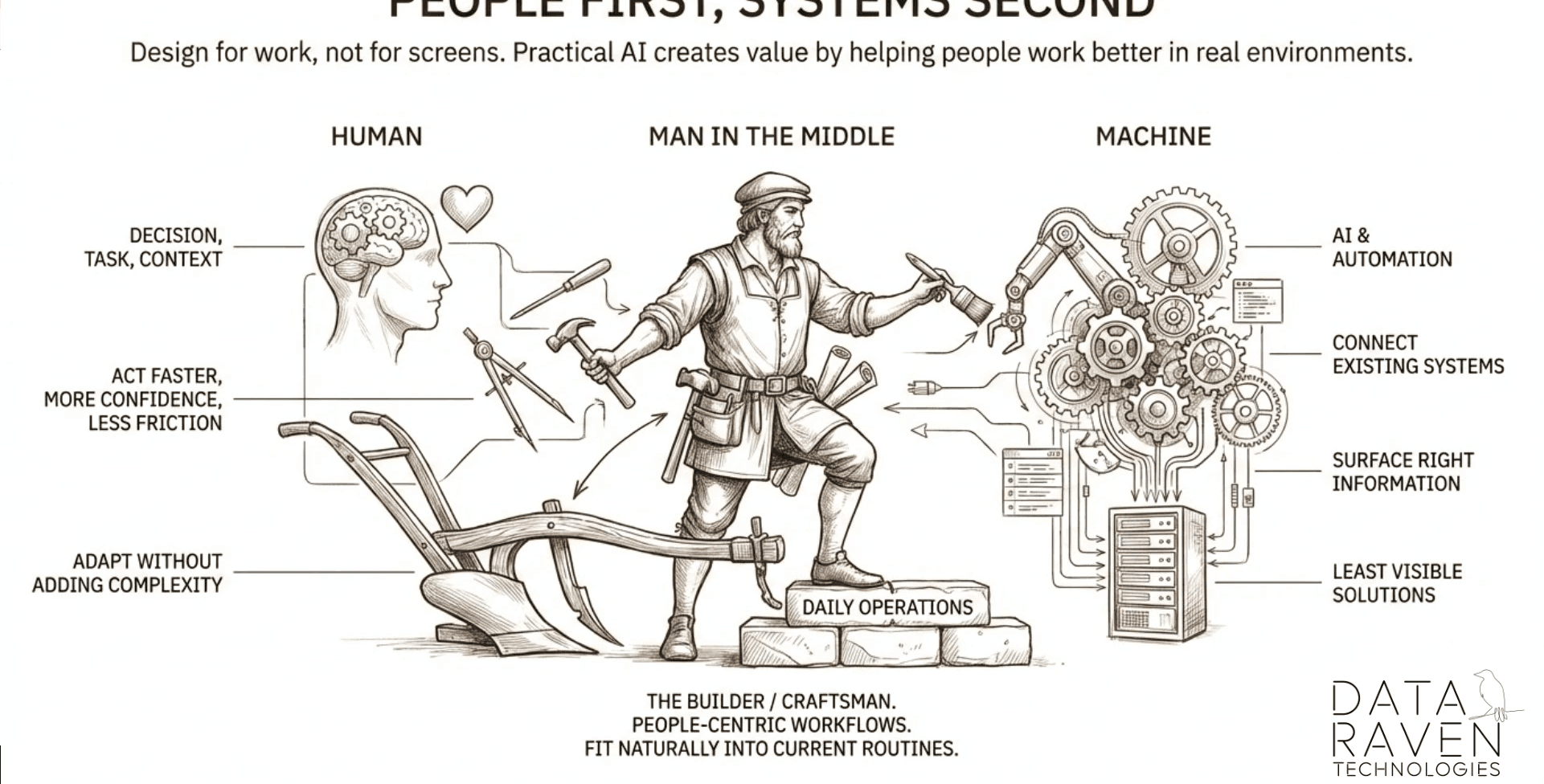

Any manufacturing AI initiative lives at the intersection of two realities: what the technology can genuinely deliver today, and what your organization is actually ready to absorb.

Getting either one wrong — overestimating capability or underestimating organizational friction — produces the same result: a pilot that never becomes production. Add to that an industry where vendor hype moves faster than enterprise readiness, and the risk is not just picking the wrong tool. It is building on assumptions that were already outdated when the project started.

There is a second consideration that often gets missed. The first AI initiative is not just a use case — it is infrastructure. Done right, your first agent becomes the foundation that makes every subsequent area faster, cheaper, and less risky to enter. The knowledge layer, governance model, and integration patterns you establish in step one are what allow the organization to expand — into new operational areas, new data sources, and new tooling — without rebuilding from scratch each time.

The question is not only: will this work? It is: will this scale into something bigger?

1. Do you know where your operational gains and losses actually reside?

Not where the loudest complaints are. Not where the last incident happened. The question is whether you have a clear, evidence-based view of where time, quality, and cost are being lost — and where a measurable improvement would actually change the business. Many organizations start with the most visible problem, not the most valuable one.

2. Are you starting where the impact spreads — not just where the problem is loudest?

The best first AI initiative is rarely the most urgent one. It is the one where a measurable improvement in one area creates downstream benefit across teams, processes, or decisions. Starting there builds internal momentum, proves the model, and makes the case for what comes next. Impact that spreads also makes the investment visible to more stakeholders.

3. Is your data captured, or just stored?

Stored data exists somewhere in the organization. Captured data is structured, labeled, consistent, and accessible. Most operational environments have the former. AI systems require the latter. The fastest programs treat data readiness as a deliverable — not an assumption — before design begins.
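One way to treat data readiness as a deliverable is to measure it. The sketch below is illustrative only: the field names in `REQUIRED_FIELDS` and the `assess_readiness` helper are assumptions made for the example, not part of any specific system. It counts how many records are "captured" in the sense above, meaning every required field is present and populated.

```python
from dataclasses import dataclass

# Hypothetical field names for a machine-event record; replace with your own schema.
REQUIRED_FIELDS = ("machine_id", "timestamp", "event_type", "label")


@dataclass
class ReadinessReport:
    total: int               # number of records inspected
    complete: int            # records with every required field populated
    missing_by_field: dict   # field name -> count of records missing it

    @property
    def completeness(self) -> float:
        """Share of records that are fully populated (0.0 for an empty input)."""
        return self.complete / self.total if self.total else 0.0


def assess_readiness(records: list[dict]) -> ReadinessReport:
    """Count records where all required fields are present and non-empty."""
    missing = {field: 0 for field in REQUIRED_FIELDS}
    complete = 0
    for record in records:
        absent = [f for f in REQUIRED_FIELDS if record.get(f) in (None, "")]
        for f in absent:
            missing[f] += 1
        if not absent:
            complete += 1
    return ReadinessReport(total=len(records), complete=complete, missing_by_field=missing)
```

A report like this gives the team a number to agree on before design begins, and the per-field counts show where capture is actually breaking down.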

4. Who owns the decision the AI would be supporting?

AI does not make decisions in manufacturing — people do. If the person or team who owns the relevant decision is not involved from the start, the system will be built around the wrong criteria. Ownership also determines accountability when the AI is wrong, which it will be.

5. What does a wrong answer cost you?

Every AI system produces errors. The question is what happens when they occur. In some contexts, a wrong answer is a minor inconvenience. In others, it triggers a production halt, a safety event, or a customer complaint. Understanding the cost of failure before you build determines how much validation, human oversight, and fallback logic the system needs.
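The cost of a wrong answer can be put in rough numbers before any system exists. The sketch below is a back-of-the-envelope calculation, not a validated model: the function names, and the assumption that human review catches a fixed share of errors at a fixed cost per decision, are simplifications made for illustration.

```python
def expected_error_cost(decisions_per_month: int,
                        error_rate: float,
                        cost_per_error: float) -> float:
    """Expected monthly cost of wrong answers: volume x error rate x cost per error."""
    return decisions_per_month * error_rate * cost_per_error


def review_is_worthwhile(decisions_per_month: int,
                         error_rate: float,
                         cost_per_error: float,
                         review_cost_per_decision: float,
                         review_catch_rate: float) -> bool:
    """Compare the error cost that human review would avoid against the cost of reviewing.

    Assumes (for illustration) that review catches a fixed fraction of errors
    and that every decision is reviewed.
    """
    avoided = expected_error_cost(decisions_per_month, error_rate, cost_per_error) * review_catch_rate
    review_cost = decisions_per_month * review_cost_per_decision
    return avoided > review_cost
```

With made-up inputs of 1,000 decisions a month, a 2% error rate, and a cost of 500 per error, the expected error cost is about 10,000 a month. If the cost per error were 5 instead, reviewing every decision would cost more than the errors it prevents. The real inputs have to come from your own operation.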

6. Have you defined what 'working' looks like before you build?

A system that impresses in a demo can still fail in production. Without specific, agreed-upon success criteria — tied to real operational outcomes, not technical benchmarks — there is no objective way to evaluate readiness. Define what good looks like, how you will measure it, and who signs off. Do this before design begins.
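Success criteria are easier to hold to when they are written down in a form that can be checked. The sketch below is one possible shape, assuming each criterion names an operational metric, a target, a direction, and the person who signs off. The `Criterion` and `evaluate` names, and the example metrics in the usage note, are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    metric: str             # operational outcome being measured
    target: float           # agreed threshold
    higher_is_better: bool  # direction in which the metric should move
    sign_off: str           # role accountable for accepting the result

    def met(self, measured: float) -> bool:
        """True when the measured value reaches the target in the right direction."""
        if self.higher_is_better:
            return measured >= self.target
        return measured <= self.target


def evaluate(criteria: list[Criterion], measurements: dict) -> dict:
    """Return {metric: passed}. A metric with no measurement counts as not met."""
    results = {}
    for criterion in criteria:
        value = measurements.get(criterion.metric)
        results[criterion.metric] = value is not None and criterion.met(value)
    return results
```

For example, a criterion such as `Criterion("first_pass_yield", 0.97, True, "quality lead")` records what good looks like, how it is measured, and who signs off, all agreed before design begins. Treating a missing measurement as a failure is a deliberate choice: a criterion nobody measured has not been met.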

7. Do you know how to capture the real complexity of the solution you are considering?

The gap between a compelling AI concept and a production-grade system is almost always larger than it appears. Integration points, data dependencies, edge cases, exception handling, security boundaries, and user behavior under pressure all add complexity that is invisible in early planning. Organizations that underestimate this tend to discover it during user acceptance testing (UAT), when changes are expensive.

8. In a market driven by constant hype, are you managing AI adoption as a technology discipline — not just a strategic ambition?

The pressure to adopt AI is real. So is the speed at which vendor capabilities, model costs, and architectural best practices are changing. A decision made on today's hype cycle may be technically outdated before it reaches production. Managing AI adoption as a discipline means evaluating objectively, building incrementally, and maintaining the flexibility to adapt — regardless of what the market is excited about this quarter.

A closing note

These questions are not a checklist. They are a structure for an honest conversation — internally, with your team, or with a partner who understands manufacturing operations. The goal is not to find reasons not to proceed. It is to proceed with clarity about what you are building, why it matters, and what it will take.

Organizations that answer these questions before they launch do not avoid difficulty. They avoid the specific kind of difficulty that comes from discovering the answers too late.

All rights reserved to Data Raven Technologies